
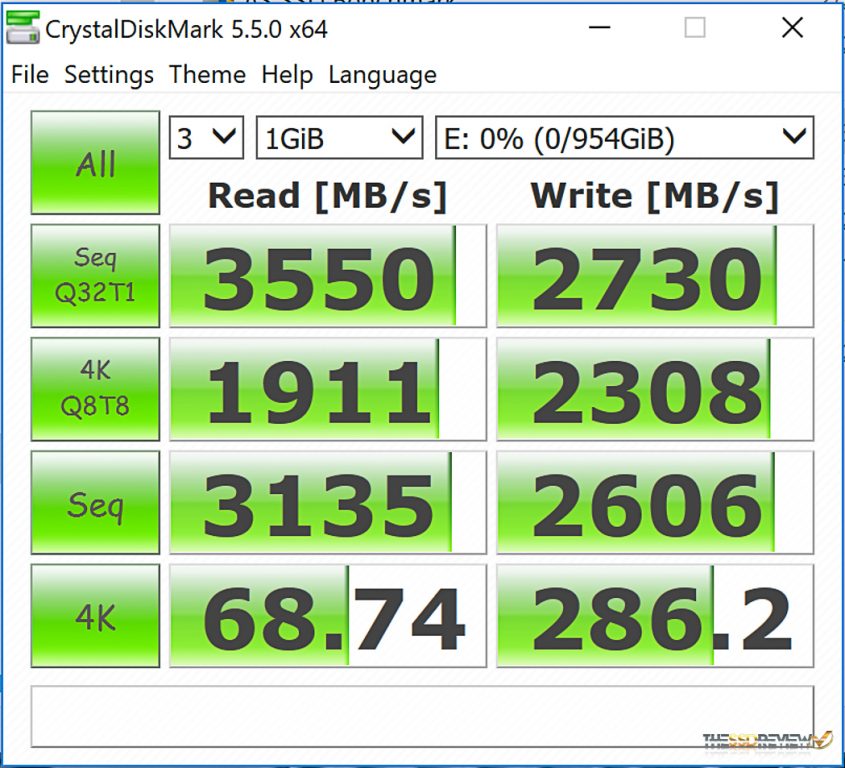

The RAID was set up in BIOS as per the instructions in E13557_PRIME_X399-A_BIOS_EM_WEB_20171030.pdf. I assigned 2 x PCIe16 and 2 x PCIe8 channels to the RAID (as per instructions). It had half my freakin I/O bandwidth. The RAID implementation by AMD is a disgrace. No wonder they give it away for free, it's worthless.

I backed up and destroyed the RAID, returned the BIOS back to standard, then restored onto the M.2 as a single drive. After 4 hours of p%ssing about and failing to get a boot, I restored to a standard SSD, which worked. When I booted up on the standard SSD I found my problem: installing the RAID drivers removed the M.2 drivers. The two drives no longer appear in Device Manager or Disk Management. I am now trying to work out how to get them reinstalled.

I have uninstalled the RAIDXpert2 software, which has not helped. Both drives are seen in BIOS (I know because when I try to restore after booting from my USB key they are valid targets). I am not even getting bad objects in Device Manager, so the system has a valid driver for them, which must be only viewable as a RAID. I am now going to start randomly deleting drivers to try to work out which one is my problem. My recommendation: DO NOT IMPLEMENT RAID on a Ryzen motherboard.

I did have the latest drivers installed, the RAID worked perfectly. As the speed test shows, twice as fast in sequential reads? Did you not see that?

As for the drives not being seen by Windows after I destroyed the RAID and restored: that turned out to be obvious and simple. NOTHING WHATSOEVER to do with not having the right drivers and EVERYTHING to do with having the right drivers. The RAID drivers install a "bottom" driver into the system.

When you install an operating system, SATA settings (be it AHCI, RAID, or IDE mode) are detected from the BIOS. If you want to change from one mode to another after the OS has been applied, appropriate drivers are required.

Bear in mind that if you modify these settings without installing proper files first, the operating system will not be able to boot until changes are reverted or required drivers are applied. With the RAID drivers installed you can only see the drives in a RAID. That simple.

CrystalDiskMark version 6 preset Queue depth and Thread count are an issue when running the bench on PCIe based drives. Note that Queue depth is set at 32 in only two of the test runs and thread count is set to 1 in all but Random 4KiB. This is comparing apples to oranges when it comes to the PCIe based drives.

Another note here is that the NVMe drives show their best at IOPS, where performance excels over all other storage devices. I would suggest clicking on Settings in Crystal Disk and from the menu select Queues and Threads. Change the values so that all tests run the same, such as Q 32 T 4, then run the bench again. After that, up the workload on the drives by bumping the Q depth up. You have 2 drives running on 4 lanes each, so a Thread count of 8 is more real world for all tests. You will eventually hit a point where performance will start to decrease.

If you really want to get serious in testing, run a trim on the array every 2 or 3 test runs and give the drives a 5 minute breather between runs so they cool down to nominal temps. For Trim you can run this PowerShell command from an elevated PS instance, where YourDriveLetter is the letter assigned to your array:

`Optimize-Volume -DriveLetter YourDriveLetter -ReTrim -Verbose`

I'm sorry E-Tech but your reply is nonsensical. You say I am comparing apples with oranges and the poor little NVMe PCIe interface/protocol has performance anxiety issues. I benchmarked one M.2 NVMe PCIe drive using the default Crystal benchmark. I then benchmarked 2 M.2 NVMe PCIe drives in RAID 0 using the default Crystal benchmark. The only difference, ONLY DIFFERENCE, between the two tests was NOT Crystal, NOT queue depth, NOT the interface, NOT the protocol. The difference was the introduction of the AMD RAID controller. M.2 is good for 32Gbps through x4 PCIe 3.0. If I change the queue depths desperately seeking some value which will give me a better result, THAT is comparing apples with oranges. You are making excuses for what is patently a piece of Sh$t RAID controller implementation by AMD. I have worked in the IT industry as a systems engineer for 40 years and I have never seen two drive RAID zero perform worse than a single drive. Unless you have max'ed out the performance. But that just means the RAID controller, which is being run by a 4.1GHz Threadripper 1900X with G.Skill Ripjaws 16GB RAM in 4 way mode running at 3.4GHz, is so poorly designed it cannot handle the data throughput; the RAID controller WITH TWO DRIVES ATTACHED has become the bottleneck.
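
For anyone wanting to sanity-check the "32Gbps through x4 PCIe 3.0" figure quoted in the thread, here is a rough back-of-the-envelope sketch in Python. It only uses the published PCIe 3.0 numbers (8 GT/s per lane, 128b/130b encoding). The function names and the "ideal RAID 0 doubles the single-drive ceiling" assumption are mine, purely for illustration; real drives, the NVMe protocol, and the RAID driver will all land below these ceilings.

```python
# Rough link-ceiling arithmetic for PCIe 3.0. Illustrative only:
# the helper names below are hypothetical, not from any tool in the thread.

PCIE3_GT_PER_LANE = 8.0          # PCIe 3.0 raw rate per lane, in GT/s
PCIE3_ENCODING = 128.0 / 130.0   # 128b/130b line encoding efficiency


def pcie3_link_gbps(lanes: int) -> float:
    """Usable link bandwidth in Gbit/s after line encoding."""
    return lanes * PCIE3_GT_PER_LANE * PCIE3_ENCODING


def pcie3_link_gbytes(lanes: int) -> float:
    """Same ceiling expressed in GByte/s (8 bits per byte)."""
    return pcie3_link_gbps(lanes) / 8.0


def ideal_raid0_gbytes(drives: int, lanes_per_drive: int) -> float:
    """Assumption: perfect RAID 0 striping scales linearly with drive count."""
    return drives * pcie3_link_gbytes(lanes_per_drive)


if __name__ == "__main__":
    print(f"x4 link:        {pcie3_link_gbps(4):.2f} Gbit/s")      # 31.51 Gbit/s
    print(f"x4 link:        {pcie3_link_gbytes(4):.2f} GByte/s")   # 3.94 GByte/s
    print(f"2 drives, x4:   {ideal_raid0_gbytes(2, 4):.2f} GByte/s")  # 7.88 GByte/s
```

So the raw 32 Gbit/s works out to roughly 3.9 GByte/s per drive at the link level. If a two-drive array benchmarks below what a single drive manages on the same settings, the PCIe link itself is not what is holding it back.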
